Online & In-Person

AIxBio Hackathon

A weekend hackathon on AI and biosecurity: DNA synthesis screening, pandemic early warning, and practitioner tools.

567 Sign Ups

Overview

The AIxBio Hackathon brings together researchers, engineers, biosecurity professionals, and AI safety enthusiasts to work on one of the most urgent intersections in safety: how AI is changing biological risk, and what we can build to stay ahead.

Over three days, participants will develop tools, prototypes, and research addressing real gaps in biosecurity infrastructure, from DNA synthesis screening and pandemic early warning systems to practitioner tools that don't exist yet.

Coefficient Giving has opened a new biosecurity Request for Proposals (RFP) and expects to direct over $100M to biosecurity this year. Conor McGurk, Chief of Staff for Biosecurity & Pandemic Preparedness, is joining us on Thursday, April 23, 2026 at 3pm ET with a 15-minute talk on what their team is prioritizing for funding and what strong Expressions of Interest look like. See the Resources tab for more information.

The hackathon ends Sunday, April 26, 2026. The RFP closes Monday, May 11, 2026 at 11:59pm PT. That leaves about two weeks (15 days) to develop a hackathon idea into a 500-word Expression of Interest (EOI). See the Resources tab for the example projects Conor highlighted.

Organized by

Apart Research | BlueDot Impact | Cambridge Biosecurity Hub

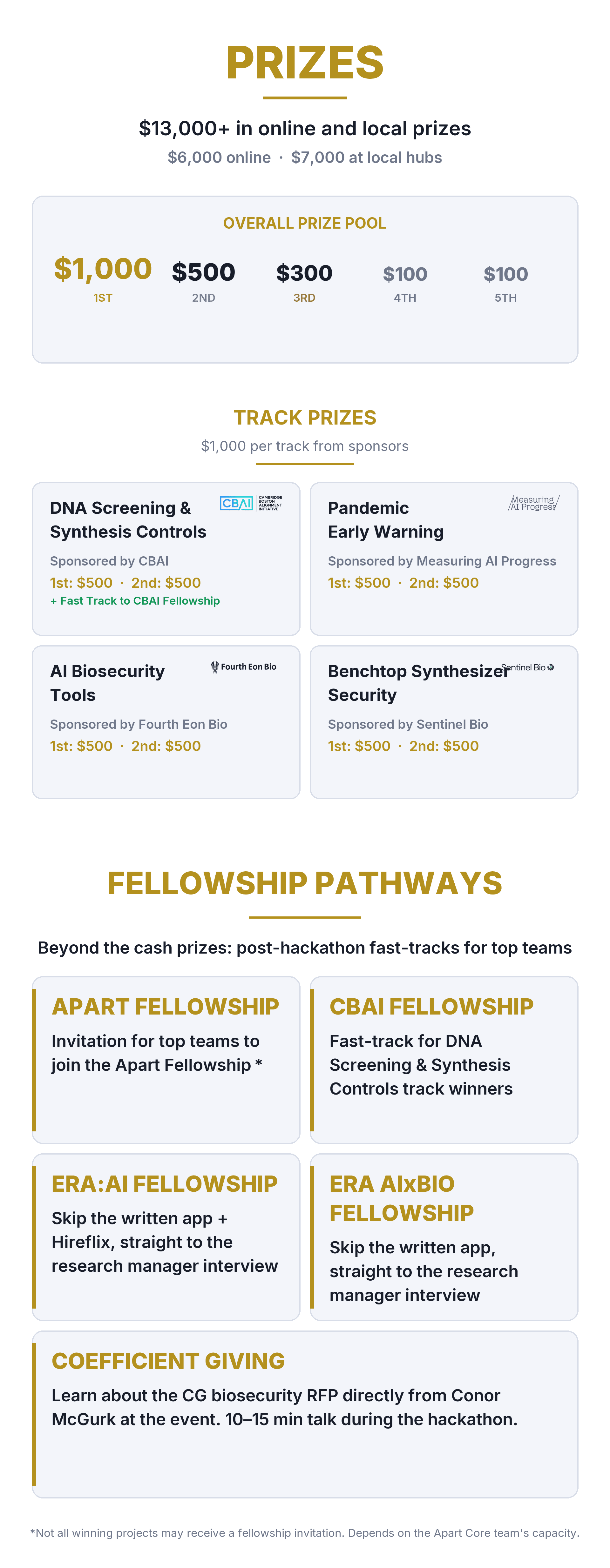

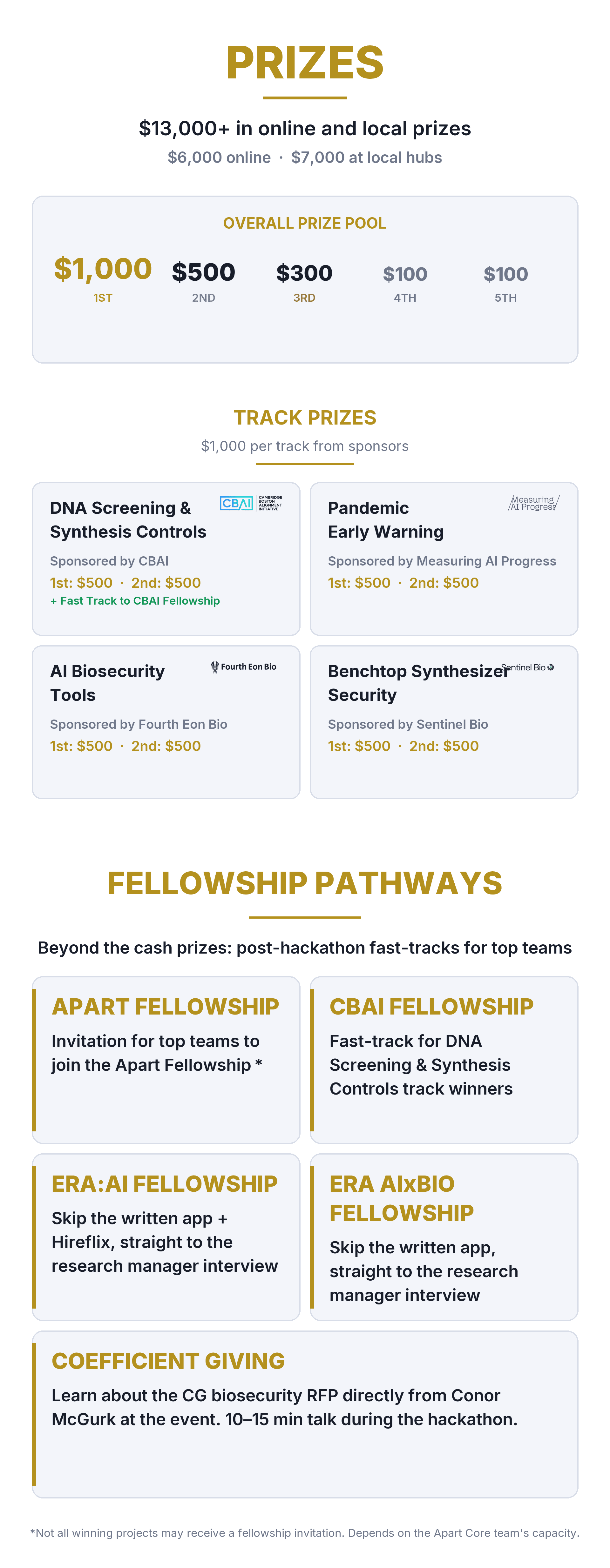

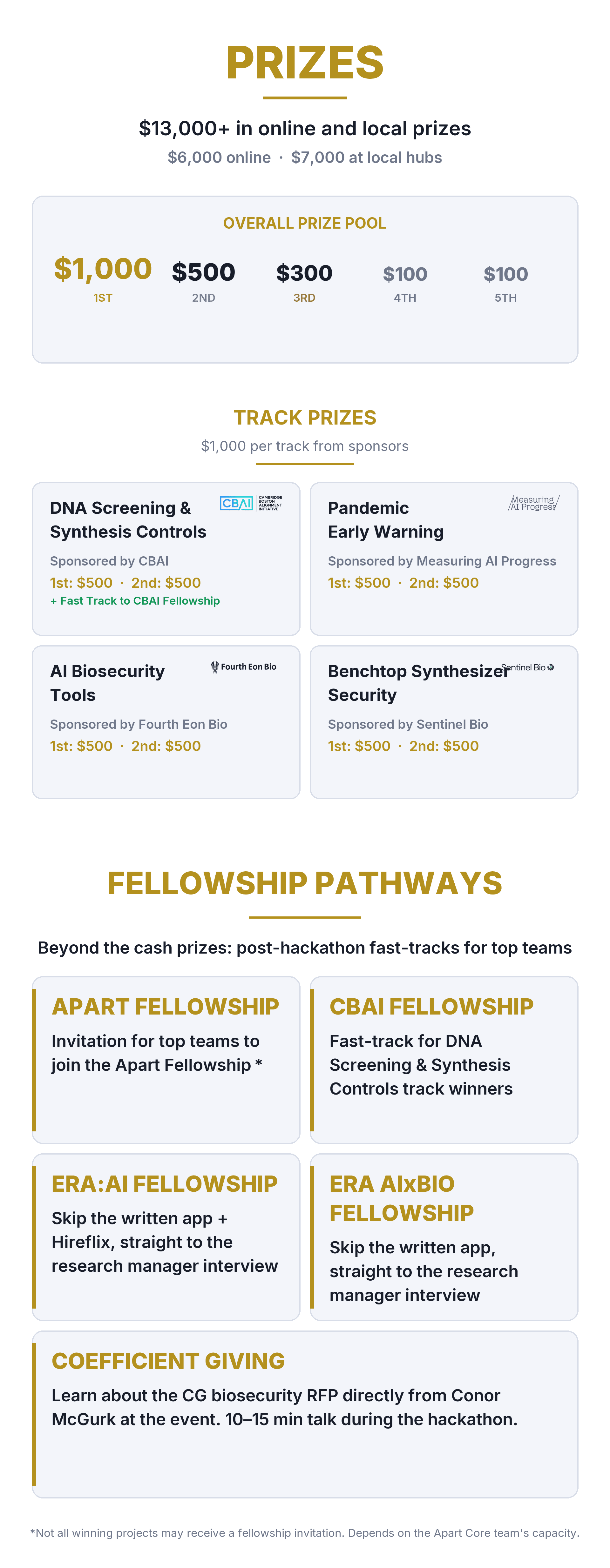

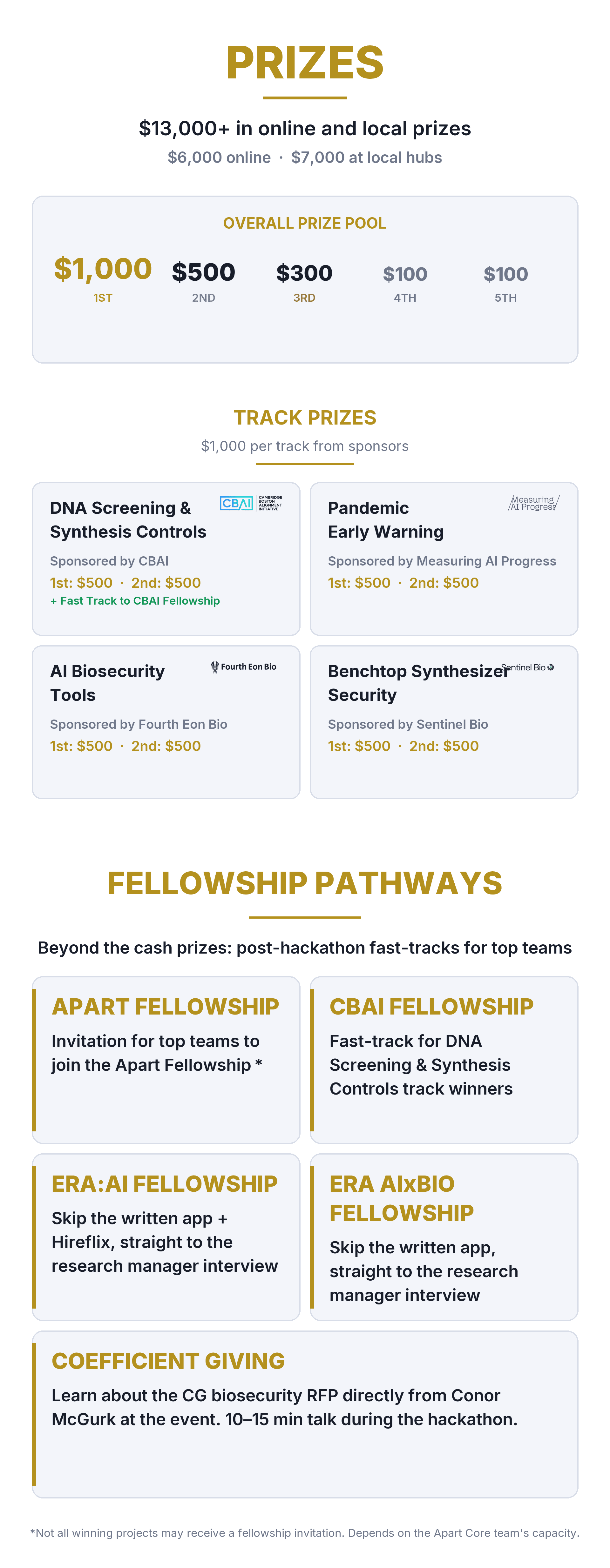

Track sponsors: CBAI (DNA Screening & Synthesis Controls) | Measuring AI Progress (Pandemic Early Warning) | Fourth Eon Bio (AI Biosecurity Tools) | Sentinel Bio (Benchtop Synthesizer Security)

What is AIxBio?

AI is transforming biology faster than biosecurity can keep up. Open-weight biological foundation models like Evo2 (a DNA language model, 40B parameters, trained on 128,000+ genomes) and protein design tools like RFdiffusion are making it possible to design novel biological sequences with less expertise than ever before. At the same time, benchtop DNA synthesizers are approaching the capability to print virus-length sequences (expected within 2-5 years), and current screening infrastructure covers only a fraction of global synthesis capacity.

On the defensive side, pathogen-agnostic metagenomic surveillance is finally becoming practical. Wastewater monitoring can detect outbreaks weeks before hospitals see cases. AI anomaly detection could identify engineered pathogens in sequencing data. But most of these tools are siloed, under-resourced, or still in the research phase. The field needs builders.

Hackathon Tracks

1. DNA Screening & Synthesis Controls (sponsored by CBAI)

Build better tools for screening DNA synthesis orders from commercial providers, detecting dangerous sequences, and creating guardrails for AI-powered biological design tools. Current screening misses AI-designed protein variants and struggles with short sequences. For projects focused on benchtop synthesizer security, see Track 4.

2. Pandemic Early Warning (sponsored by Measuring AI Progress)

Develop AI-powered surveillance, anomaly detection, and data integration tools for catching outbreaks earlier. Wastewater monitoring, metagenomic sequencing, and open-source disease intelligence are all ripe for better tooling.

3. AI Biosecurity Tools (sponsored by Fourth Eon Bio)

Build the tools that biosecurity practitioners actually need: unified dashboards, rapid risk assessment systems, policy trackers, secure communication tools, and accessible resources for under-resourced institutions.

4. Benchtop Synthesizer Security (sponsored by Sentinel Bio)

Build security infrastructure for benchtop DNA synthesizers before they reach dangerous capability thresholds: phone-home screening, tamper-proof hardware, split order detection, and customer verification. No mandatory screening exists today, but US and EU legislative momentum is opening a window to change this.

Who should participate?

AI safety researchers and engineers

Biosecurity and public health professionals

Machine learning researchers interested in biological applications

Security researchers and red teamers

Policy researchers working on biogovernance

Software engineers interested in building safety infrastructure

Students and early-career researchers exploring biosecurity

No prior biosecurity experience is required. The Resources tab has a curated reading list organized by track. Teams typically form at the start of the event.

What you will do

Over three days, you will:

Form teams and choose a challenge track

Research and scope a specific problem using the provided resources

Build a project: a tool, prototype, evaluation, or research contribution

Submit a research report (PDF) documenting your approach, results, and implications

Have your work reviewed by judges from leading biosecurity and AI safety organizations

What happens next

After the hackathon, all submitted projects will be reviewed by expert judges. Top projects receive prizes and are featured on the Apart Research website. Outstanding work may be invited for further development, publication, or presentation at upcoming events.

Why join?

Apart Research has organized 55+ research sprints with 7,000+ participants across 200+ global locations. Co-organized with BlueDot Impact and Cambridge Biosecurity Hub, with track sponsorship from CBAI, Sentinel Bio, Fourth Eon Bio, and Measuring AI Progress. Our hackathons produce real research output: published papers, new research collaborations, and contributions to open-source safety tools used across the field.

Speakers & Collaborators

Kevin Esvelt

Keynote

Kevin M. Esvelt is an associate professor at the MIT Media Lab, where he directs the Sculpting Evolution Group. In 2013, he was the first to identify the potential for CRISPR gene drive systems to alter wild populations, and he broke with scientific tradition by calling for open discussion and safeguards before any lab demonstrated the technology. His current work focuses on catastrophic biorisk mitigation: he co-founded SecureDNA, a cryptographic platform for universal DNA synthesis screening, and helped develop the Nucleic Acid Observatory, a global early-warning system for biological threats.

Oliver Crook

Keynote

Dr. Oliver Crook is an MRC Career Development Award Fellow and Group Leader at the Kavli Institute for NanoScience Discovery, University of Oxford, and a Todd-Bird Junior Research Fellow at New College. His group develops computational and statistical methods drawing on Bayesian machine learning, structural biology, and AI interpretability, with applications in molecular parasitology, protein design, and biosecurity. Before starting his group in 2025, he was seconded to the UK Government's AI Safety Institute to lead a protein design team.

Jaime Yassif

Keynote

Dr. Jaime Yassif is Senior Advisor for Global Biological Policy and Programs at NTI | bio, where she focuses on governance and safeguards at the intersection of AI and the life sciences. She previously served as Vice President of NTI | bio, overseeing the organization's work to reduce global catastrophic biological risks, and before that as the inaugural Biosecurity and Pandemic Preparedness Program Officer at Open Philanthropy, where she directed approximately $40 million in grants that helped build the modern biosecurity field. Dr. Yassif holds a Ph.D. in Biophysics from UC Berkeley and has advised the U.S. Department of Defense and Department of Health and Human Services on biosecurity policy.

Conor McGurk

Speaker

Conor McGurk is Chief of Staff for Biosecurity & Pandemic Preparedness at Coefficient Giving (formerly Open Philanthropy), where he works on grantmaking strategy across one of the largest philanthropic funders of global catastrophic risk work. Before joining Coefficient Giving in 2025, he co-founded and led the Safe AI Forum, an AI safety nonprofit that organized the International Dialogues on AI Safety series, bringing together Turing Award winners, former heads of state, and leading AI scientists to build consensus on global AI governance. He previously held roles in engineering management at Meta and technology strategy consulting at Deloitte, and holds a BSc in Computer Science and Philosophy from the University of British Columbia.

Cassidy Nelson

Speaker

Dr. Cassidy Nelson is the Director of Biosecurity Policy at the Centre for Long-Term Resilience (CLTR), leading a team working to safeguard against pandemics and address extreme biological risks through targeted policy engagement. A dual-trained physician-scientist, she holds a DPhil in Biology from Oxford, an MPH from the University of Melbourne, and a Medical Degree from the University of Queensland. Before CLTR, she spent a decade as a clinician and biosecurity researcher at Oxford, and has advised the WHO and the Bipartisan Commission on Biodefense on health security policy.

Coleman Breen

Speaker

Coleman Breen is a Fellow at the Johns Hopkins Center for Health Security and a Senior AI Policy Researcher at SecureBio, where he works on biosecurity-relevant AI evaluations and policy. He is a PhD candidate in Statistics at UW-Madison, with research focused on the statistical evaluation of genetic sequence machine learning models. His background spans computational biology, AI, and a stint as an AI resident at Google[x] working on agricultural biotechnology.

Gene Olinger

Speaker

Gene Olinger, PhD, MBA, is the Director of the Galveston National Laboratory and Professor of Microbiology and Immunology at the University of Texas Medical Branch, where he holds the John Sealy Distinguished University Chair in Tropical and Emerging Virology. He brings over 20 years of BSL-3/4 research experience, with a focus on vaccine and therapeutic development for Ebola and other high-consequence emerging pathogens. Before joining UTMB, he served at USAMRIID and NIH NIAID, where he was a key contributor to the development of ZMapp, the Ebola treatment deployed during the West Africa outbreak.

James Black

Speaker

Dr. James Black is a Contributing Scholar at the Johns Hopkins Center for Health Security, where he focuses on technical assessments of AI-enabled biological risks. He trained as a medical doctor at the University of Oxford and Imperial College London, earned his PhD in computational cancer biology from University College London, and completed a postdoctoral fellowship at the Francis Crick Institute.

Jonas Sandbrink

Speaker and Judge

Jonas Sandbrink is Entrepreneur-in-Residence at Sentinel Bio, where he works on researcher credentialing for access to bio-capable AI systems. He was previously at the UK AI Security Institute (AISI), where he founded the chem/bio evaluations team, the first government program anywhere to assess whether frontier AI models could enable biological or chemical misuse. Before AISI, he advised the UK Cabinet Office and Google DeepMind on biosecurity. He holds a DPhil from Oxford's Nuffield Department of Medicine, where his research examined misuse risks from synthetic virology and AI.

Steph Guerra

Speaker

Dr. Steph Guerra is a molecular biologist and biosecurity expert serving as Senior Biosecurity Research Resident and AIxBio Lead at the RAND Center on AI, Security, and Technology, where she researches the convergence of AI and biotechnology to advance security and the public good. Prior to RAND, she was Head of Strategic Partnerships at the U.S. AI Safety Institute at NIST, where she led efforts to evaluate the national security risks of advanced AI systems, and earlier worked at the White House Office of Science and Technology Policy on biodefense strategy and policy. She holds a PhD in biological and biomedical sciences from Harvard University.

Alex Kleinman

Speaker

Alex Kleinman is Co-founder and Director of Operations at Active Site (formerly Panoplia Labs), a research nonprofit measuring AI's impact on biosecurity risks. He co-led the wet-lab RCT on whether LLMs provide biological uplift to novices, contributed to ABC-Bench (an agentic biosecurity benchmark presented at NeurIPS 2025), and co-authored a study on antibiotic and vaccine countermeasures against mirror bacteria. Before Active Site, he was on the science team at Alvea, working on broad-spectrum vaccines.

Jasper Götting

Judge

Jasper Götting is Head of AI Research at SecureBio, where his work covers understanding, measuring, and mitigating the effects of AI progress on biological risks. Before joining SecureBio, Jasper worked on airborne transmission suppression at Convergent Research and pathogen-agnostic diagnostics for the Ellison Institute of Technology Oxford. He holds a Master's in Biomedical Science and a PhD in Virology from the Hannover Medical School.

Jason Hoelscher-Obermaier

Organizer and Judge

Director of Research at Apart Research. Ph.D. in physics from Vienna. Focuses on AI safety evaluations, interpretability, and alignment.

Grace Braithwaite

Organizer

Dr. Grace Braithwaite is Co-Founder and Director of the Cambridge Biosecurity Hub (cambiohub.org), where she leads the organisation's work building the biosecurity research community in Cambridge and beyond. A medical doctor by training, she completed two years of NHS Foundation training across General Surgery, Cardiology, Endocrinology, A&E and General Practice, alongside a year as a part-time locum. She holds an MBChB and a Master of Public Health (Merit) from the University of Sheffield, with field research on health-system resilience after the 2015 Nepal earthquake and on child nutrition programmes in Bangladesh with World Vision.

Joshua Landes

Organizer

Joshua Landes leads Community & Training at BlueDot Impact, where he runs the AISF community and facilitates AI Governance and Economics of Transformative AI courses. Previously, he worked at AI Safety Germany and the Center for AI Safety, after managing political campaigns for the FDP in Germany.

Jack Burgess

Organizer and Judge

Dr. Jack Burgess completed his Ph.D. in Neural Computation at Carnegie Mellon University in 2025 before moving to Berlin, Germany. Having worked broadly on scientific applications of AI and machine learning throughout his academic training, as well as having published in the area of computational biology, he is delighted to be leading the Berlin hub's local AIxBio Hackathon. He is currently on the lookout for new opportunities: reach out if your team is growing!

Akshay Iyer

Judge and Co-organizer

CS and Entrepreneurship at Columbia University, IIT Bombay alum. Research experience in neuromorphic engineering and federated learning. Apart Research judge and internal organizer.

Adam Howes

Judge

Adam Howes is a statistician currently working with CDC CFA on epidemic surveillance and with Active Site on studies evaluating AI assistance for biological tasks. He holds a PhD in statistics from Imperial College London.

Alex Norman

Judge

Alex completed his PhD in Interdisciplinary Bioscience at Oxford and has over 7 years of experience in immunology, bioinformatics, and virology. He has worked on broad-spectrum antivirals and vaccines and carried out epidemiology work during COVID-19. He has worked across biosecurity disciplines on technical projects with Sentinel Bio, SecureBio, and the Mirror Biology Dialogues.

Andrew Liu

Judge

Andrew is a Senior Research Scientist at SecureBio, where he has led or contributed to six AI biosecurity benchmarks including those used by OpenAI, Anthropic, and Google DeepMind. Previously, he earned his PhD in computational biology at Harvard Medical School and his BA/MS in math and computer science at Harvard, and has published scientific papers on early pandemic detection, DNA synthesis screening, and genetic engineering attribution.

Andy Morgan

Judge

Andy is a senior policy advisor at the Ministry of Business, Innovation and Employment in New Zealand. His role focuses on the regulation of biotechnology and he has recently represented the New Zealand government internationally on the topic of nucleic acid synthesis screening. Andy was an ERA AIxBio Research Fellow and recently launched the AIxBio Research Hub to help new researchers familiarize themselves with the field.

Benjamin Fefferman

Judge

Benjamin is a technical AI safety researcher, and is compelled to apply his interdisciplinary knowledge of computer science, biology, and chemistry to ensuring that the AI revolution is beneficial for humanity. Since his graduate studies in bioinformatics and genomics at Harvard, and machine learning research at MIT, he has led research pertaining to DNA synthesis screening at the AIxBiosecurity fellowship in Cambridge, UK.

Bryan Tegomoh

Judge

Bryan Tegomoh, MD, MPH, is a physician and epidemiologist with experience in AI, biosecurity, and public health. Author of the Biosecurity Handbook: Biological Security in the AI Era. He led the investigation of the first reported U.S. Omicron variant cluster (early warning system) and has served as a WHO Technical Consultant on Epidemic and Pandemic Preparedness. He works on AI safety in biosecurity and responsible AI in medicine and public health.

Chris Stamper

Judge

Chris is a biosecurity researcher focused on reducing risks from catastrophic biological threats, particularly novel threats emerging from the overlap of AI, biotechnology, and synthetic biology. He brings deep hands-on expertise in immunology, virology, computational biology, and machine learning to evaluating frontier AI capabilities, modeling novel biosecurity threats, and building the field of AIxBio. He has a PhD in immunology from the University of Chicago.

Christine Parthemore

Judge

Christine Parthemore is Director of CSR's Janne E. Nolan Center on Strategic Weapons at the Council on Strategic Risks, where she previously served as CEO for six years and was a founding Board Member. Her current work covers issues in biosecurity and biodefense, countering weapons of mass destruction, innovation and technology trends in national security, and more. She also serves on the Board of SecureBio. Among her prior roles, Christine served as the Senior Advisor to the Assistant Secretary of Defense for Nuclear, Chemical, and Biological Defense Programs in the U.S. Department of Defense.

Elian Belot

Judge

Elian Belot is a founding engineer at Serova Bio, where he builds AI and computational biology systems for personalized cancer vaccines. He previously worked on gene editing and protein design.

Elise Racine

Judge

Elise Racine is a Research Manager at MATS and a PhD candidate and Clarendon Scholar at the University of Oxford, affiliated with the Institute for Ethics in AI and the Ethox Centre. Her work focuses on AI x biosecurity risks, including AI misuse in biological threat development and the governance of dual-use systems. She has contributed to pandemic surveillance and response efforts across multiple capacities and has advised organizations such as the United Nations and U.S. government agencies on issues spanning AI safety, global health security, and policy/governance.

Eva Siegmann

Judge

Eva Siegmann is a Visiting Fellow at the Council on Strategic Risks, where she works on international biodefense initiatives and US biosecurity policy. She previously worked as a Political Affairs Intern at the Biological Weapons Convention Implementation Support Unit in Geneva and at several research institutes, including the Institute for Peace Research and Security Policy at the University of Hamburg, the Fondation pour la Recherche Stratégique and the Nuclear Threat Initiative. She holds an MA in Security Studies from Georgetown University and a Double BA in social and political science from Sciences Po Paris and the Free University of Berlin.

Evan Fields

Judge

Evan Fields is a Research Scientist at SecureBio, where he works on pathogen detection and pandemic early warning systems. He was recently selected for the 2025-26 Fellowship for Ending Bioweapons Programs at the Council on Strategic Risks. Before SecureBio, he was VP of Engineering and Data Science at Zoba, an urban mobility software company, where he had previously led the data science team. He holds a PhD in Operations Research from MIT, with research on estimating latent demand for urban mobility services, and a BA in Mathematics and Computer Science from Pomona College.

Gary Ackerman

Judge

Dr. Gary Ackerman is the CEO and Founder of Nemesys Insights. He has over two decades of expertise in strategic forecasting, risk assessment and human behavior modeling. He concurrently serves as a Professor of Homeland Security at the University at Albany, SUNY. Much of his recent work has applied his red teaming and risk evaluation methodologies to explore risk at the intersection of AI and CBRN, conducting over a dozen risk evaluations and mitigations of frontier AI models in these domains.

Hanna Palya

Judge

Hanna is a PhD student in mathematical epidemiology, working on developing syndromic surveillance into an early warning system for outbreaks with the UK Health Security Agency and the University of Warwick. She is also a research fellow at Fourth Eon Bio, working on function-based sequence screening. In the past three months, she worked on AI-bio threat modelling as a seasonal fellow at GovAI. In the past year, she helped build a free KYC-tool for DNA providers and scoped a defense-funded R&D programme for function-based sequence screening at CLTR.

Hayley Severance

Judge

Hayley Anne Severance is the deputy vice president for NTI's Global Biological Policy and Programs team, bringing 15+ years of experience in biosecurity, biological threat reduction, and global health security policy. Prior to NTI, she developed strategic policy for the Department of Defense's Cooperative Biological Engagement Program and advanced the U.S. commitment under the Global Health Security Agenda. She holds an MPH in Infectious Disease Epidemiology from George Washington University and is an alumna of the Emerging Leaders in Biosecurity Initiative Fellowship.

Ian Beatty

Judge

Ian Beatty, Associate Professor of Physics at UNC Greensboro, has a long history of exploring and teaching computational modeling. He recently pivoted into biosecurity R&D and completed a research fellowship in the ERA AIxBio program in Cambridge, England. He is currently working on DNA synthesis screening.

Ihor Kendiukhov

Judge

Ihor is a researcher at the intersection of AI Safety and AI for biology. He is the founder of BiodynAI, a project to apply mechanistic interpretability to biological foundation models. He is also research lead at AI Safety Camp and SPAR.

Jack Douglass

Judge

Jack is a computational biologist and undergraduate at the University of Arizona. Currently, he designs molecular diagnostics at Strive Bioscience, an early-stage startup. He's built bioinformatics software for SecureBio and worked on synthesis screening standards as an ERA Fellow. His research interests include molecular diagnostics, function-based synthesis screening, and transmission suppression technologies.

Joey Bream

Judge

Joey Bream is the Founder and CEO of Bream Design, a software agency that builds backend systems for charities. He also founded the Automation Builders Club, which runs coding events in London. Previously, he spent two years as Operations Assistant at the Krueger AI Safety Lab (KASL) at the University of Cambridge, one of the UK's most influential AI safety research groups. He holds a Master of Engineering in Energy Sustainability and the Environment from Cambridge, where his master's project developed a data model for optimising carbon emissions.

John O'Brien

Judge

John "JT" O'Brien is the Associate Director of Research at the Atlantic Council's Bipartisan Commission on Biodefense and a DPhil candidate in mathematical biology at the University of Oxford. His work focuses on the critical intersection of artificial intelligence, biosecurity, and the life sciences, bridging the gap between scientific innovation and national policy.

John Wang

Judge

Ph.D. from Harvard. Chief Scientific Officer at TwentyTwo. Research focuses on AI-biosecurity, viral evolution, and developing interpretable machine learning tools for biological applications.

Keri Warr

Judge

Keri is a Security Engineering Manager at Anthropic, where he's spent over three years helping build the security organization from the ground up. He also advises external security researchers developing AI tools to secure the open source supply chain and has published research on methods for protecting model weights from sophisticated adversaries.

Kevin Flyangolts

Judge

Kevin Flyangolts is Founder and CEO of Aclid, a biosecurity and biosafety platform. Leading life sciences institutions use Aclid's platform for denied party screening, KYC, trade compliance, and risk intelligence for complex, obfuscated, and AI-generated designs. Building on its commercial screening technology, Aclid also works with US and UK governments in early-warning, agnostic biothreat intelligence including handheld and deployed, continuous sequencing.

Laura Bailey

Judge

Laura is completing her PhD in Medical Sciences at the University of Oxford, where her research examines the regulation of innate immunity. During her PhD, she gained policy experience through an internship at the Academy of Medical Sciences. Previously, she completed an integrated Master's in molecular and cellular biochemistry, also at Oxford, researching bacterial genomics and virulence. She is now pivoting into biosecurity policy, focusing on how AI is shaping the life sciences and how progress trades off against risk. Most recently she was a Winter 2026 ERA AIxBio Fellow, researching AI-enabled biosurveillance.

Lee M. Wall

Judge

Alumnus of the ERA AIxBio Fellowship, where he researched tamper-resistant safeguards for open-weight biological foundation models. 3+ years of experience in data science for CBRN risk assessment.

Lennart Justen

Judge

Lennart Justen is a PhD candidate at MIT and the Broad Institute where he is co-advised by Kevin Esvelt and Pardis Sabeti. His work focuses on biosecurity and pandemic preparedness, with a particular focus on pandemic early warning systems and safeguards for dual use AIxBio systems. He has previously worked on biosecurity at SecureBio, the Council on Strategic Risks, and the U.S. State Department.

Leonard Foner

Judge

Leonard Foner is the software architect and security lead for SecureDNA, with a research background in cryptography, distributed systems, and privacy-protecting technologies.

Lucas Boldrini

Judge

Lucas Boldrini is a Technical Consultant at IBBIS, where he supports global biosecurity efforts through DNA synthesis screening and dual-use governance. He has led partnerships and contributed to key components of the Common Mechanism, an open-source tool for identifying potentially hazardous genetic sequences. He is a former Team Experience Manager at the iGEM Foundation, where he managed the world's largest synthetic biology competition. Lucas holds a Master's in Biophysics from the Barcelona Institute of Science and Technology.

Manu Shivakumara

Judge

Manu Shivakumara is a Senior Bioinformatics Engineer at IBBIS, where he supports the development of the Common Mechanism, an open-source platform for DNA synthesis screening. He holds a Ph.D. in Genomics and an M.Sc. in Bioinformatics and Biotechnology, with over eight years of experience analyzing high-dimensional genomic data across diverse taxa. He has authored 20+ peer-reviewed publications in metagenomics, population genomics, and comparative genomics, including in Nature and Science, with over 800 citations.

Max Görlitz

Judge

Max works as a Policy Officer at DG HERA at the European Commission, focusing on R&D funding for innovative countermeasures against pandemics. Previously, he worked on far-UVC research at SecureBio and studied genomics and medicine in Oxford and Munich.

Moritz Hanke

Judge

Dr. Moritz S. Hanke is a Fellow at the Johns Hopkins Center for Health Security, where he focuses on high-consequence biological risks from AI models and nucleic acid synthesis security. Based in Brussels, he engages in European, transatlantic, and international biosecurity policy discussions. He was previously an Ending Bioweapons Fellow at the Council on Strategic Risks and worked on early pathogen detection at SecureBio. He is a licensed medical doctor trained at the Charité Berlin and the University of Tübingen.

Nelly Mak

Judge

Nelly is a Research Scientist on the SecureBio AI team, where she works on AIxBio evals and building safeguards against AI-enabled biorisks. She holds a PhD focused on the natural hosts of zoonotic viruses and brings extensive wet-lab virology expertise to her work.

P. T. Nhean

Judge

P.T. Nhean is the Director of Biosecurity at AI Safety Asia (AISA), where they lead research and policy work at the intersection of artificial intelligence and biological risk across Asia. Based in Singapore, P.T. has contributed to AI-biosecurity governance at national and regional levels, including advising on nucleic acid screening for sequences of concern and leading a study on AI applications in engineering biology across Southeast Asia, South Asia, and the UK. She also worked on epidemiological modeling focusing on influenza with the National University of Singapore, co-drafted the Youth for Health declaration with the WHO European Region, and co-founded Southeast Asia Biosecurity (SEA Bio).

Piers Millett

Judge

Piers D. Millett, Ph.D. is Executive Director of the International Biosecurity and Biosafety Initiative for Science (IBBIS). He was previously Deputy Head of the Implementation Support Unit for the Biological Weapons Convention, where he worked for over a decade, and Vice President for Responsibility at iGEM Foundation. Trained as a microbiologist, he has consulted for the WHO and holds a Ph.D. in International Relations.

Ramanpreet Singh Khinda

Judge

Ramanpreet Singh Khinda is a Staff Software Engineer at LinkedIn and an IEEE Senior Member. He is an international speaker, hackathon judge, and mentor with experience at Google and Snap.

Rassin Lababidi

Judge

Rassin Lababidi is a technical lead at IBBIS working on developing nucleic acid screening standards. He is focused on moderating the Sequence Biosecurity Risk Consortium, evaluating the quality of sequence screening systems, and the responsible access of AI-enabled biological design tools. He has a background in infectious diseases and immunology and has worked in biosecurity and pandemic preparedness for several years.

Sana Zakaria

Judge

Director of Emerging Technologies and Resilience at RAND Europe. Research spans biosecurity policy, pandemic preparedness, and AI-biotech convergence. Works with NATO, CEPI, WHO, and the BWC. PhD in molecular and neurobiology from King's College London.

Stephen Turner

Judge

Stephen D. Turner, Ph.D. is an Associate Professor of Data Science and Assistant Dean for Research at the University of Virginia School of Data Science. Stephen's research spans public health, computational genomics, and AI+biosecurity. Stephen previously held roles at Colossal Biosciences, and at Signature Science, where he worked in biosecurity for national security and defense.

Vinoo Selvarajah

Judge

Vinoo Selvarajah is the former VP of Technology at the iGEM Foundation with a long history of working with teams, projects, and education centered on DNA parts. He focuses on synthetic biology technologies and infrastructure that benefit developing bioeconomies, and how to ensure these are distributed and accessible so the global bioeconomy is inclusive and safe.

Zachary Kallenborn

Judge

Zachary Kallenborn is a PhD candidate in Risk Analysis at King's College London researching risk and uncertainty with topical focuses on global catastrophes, drone warfare, critical infrastructure, WMD, and apocalyptic terrorism. He is also affiliated with the University of Oxford, the Center for Strategic and International Studies, George Mason University, and the National Institute for Deterrence Studies.

Zuzanna Matuszewska

Judge

AI biosecurity evaluations researcher at EquiStamp, building capability assessments for biological domains. Holds an MD and is completing a BSc in Mathematics at the University of Warsaw. Active in the biosecurity and effective altruism communities in Poland and Europe.

Ahmed Tijani Akinfalabi

Judge

Ahmed Tijani Akinfalabi is finishing his Master's in Bioinformatics at the University of Potsdam, working at the intersection of machine learning and systems biology. His research covers RNA-Seq analysis, genome annotation, and gene regulatory network inference, and he has built computer vision pipelines with Detectron2 and YOLOv8 for biological imaging on HPC systems.

Miti Saksena

Judge

Miti Saksena, MBBS, MSc, is a physician-scientist working at the intersection of biosecurity, health AI, and clinical development. She served in NYC's initial COVID-19 response, ran postdoctoral mRNA vaccine immunology research at Mount Sinai, and contributed to accelerated vaccine trials at Alvea. She has consulted on metagenomic sequencing and Far-UVC pathogen mitigation, worked independently on DNA synthesis screening tools, facilitated BlueDot biosecurity courses since 2024, and served as a physician expert evaluator on OpenAI's HealthBench. She currently consults on IVF automation. Her research interests are AI applications in biosecurity and respiratory virus transmission suppression.

Speakers & Collaborators

Kevin Esvelt

Keynote

Kevin M. Esvelt is an associate professor at the MIT Media Lab, where he directs the Sculpting Evolution Group. In 2013, he was the first to identify the potential for CRISPR gene drive systems to alter wild populations, and he broke with scientific tradition by calling for open discussion and safeguards before any lab demonstrated the technology. His current work focuses on catastrophic biorisk mitigation: he co-founded SecureDNA, a cryptographic platform for universal DNA synthesis screening, and helped develop the Nucleic Acid Observatory, a global early-warning system for biological threats.

Oliver Crook

Keynote

Dr. Oliver Crook is an MRC Career Development Award Fellow and Group Leader at the Kavli Institute for NanoScience Discovery, University of Oxford, and a Todd-Bird Junior Research Fellow at New College. His group develops computational and statistical methods drawing on Bayesian machine learning, structural biology, and AI interpretability, with applications in molecular parasitology, protein design, and biosecurity. Before starting his group in 2025, he was seconded to the UK Government's AI Safety Institute to lead a protein design team.

Jaime Yassif

Keynote

Dr. Jaime Yassif is Senior Advisor for Global Biological Policy and Programs at NTI | bio, where she focuses on governance and safeguards at the intersection of AI and the life sciences. She previously served as Vice President of NTI | bio, overseeing the organization's work to reduce global catastrophic biological risks, and before that as the inaugural Biosecurity and Pandemic Preparedness Program Officer at Open Philanthropy, where she directed approximately $40 million in grants that helped build the modern biosecurity field. Dr. Yassif holds a Ph.D. in Biophysics from UC Berkeley and has advised the U.S. Department of Defense and Department of Health and Human Services on biosecurity policy.

Conor McGurk

Speaker

Conor McGurk is Chief of Staff for Biosecurity & Pandemic Preparedness at Coefficient Giving (formerly Open Philanthropy), where he works on grantmaking strategy across one of the largest philanthropic funders of global catastrophic risk work. Before joining Coefficient Giving in 2025, he co-founded and led the Safe AI Forum, an AI safety nonprofit that organized the International Dialogues on AI Safety series, bringing together Turing Award winners, former heads of state, and leading AI scientists to build consensus on global AI governance. He previously held roles in engineering management at Meta and technology strategy consulting at Deloitte, and holds a BSc in Computer Science and Philosophy from the University of British Columbia.

Cassidy Nelson

Speaker

Dr. Cassidy Nelson is the Director of Biosecurity Policy at the Centre for Long-Term Resilience (CLTR), leading a team working to safeguard against pandemics and address extreme biological risks through targeted policy engagement. A dual-trained physician-scientist, she holds a DPhil in Biology from Oxford, an MPH from the University of Melbourne, and a Medical Degree from the University of Queensland. Before CLTR, she spent a decade as a clinician and biosecurity researcher at Oxford, and has advised the WHO and the Bipartisan Commission on Biodefense on health security policy.

Coleman Breen

Speaker

Coleman Breen is a Fellow at the Johns Hopkins Center for Health Security and a Senior AI Policy Researcher at SecureBio, where he works on biosecurity-relevant AI evaluations and policy. He is a PhD candidate in Statistics at UW-Madison, with research focused on the statistical evaluation of genetic sequence machine learning models. His background spans computational biology, AI, and a stint as an AI resident at Google[x] working on agricultural biotechnology.

Gene Olinger

Speaker

Gene Olinger, PhD, MBA, is the Director of the Galveston National Laboratory and Professor of Microbiology and Immunology at the University of Texas Medical Branch, where he holds the John Sealy Distinguished University Chair in Tropical and Emerging Virology. He brings over 20 years of BSL-3/4 research experience, with a focus on vaccine and therapeutic development for Ebola and other high-consequence emerging pathogens. Before joining UTMB, he served at USAMRIID and NIH NIAID, where he was a key contributor to the development of ZMapp, the Ebola treatment deployed during the West Africa outbreak.

James Black

Speaker

Dr. James Black is a Contributing Scholar at the Johns Hopkins Center for Health Security, where he focuses on technical assessments of AI-enabled biological risks. He trained as a medical doctor at the University of Oxford and Imperial College London, earned his PhD in computational cancer biology from University College London, and completed a postdoctoral fellowship at the Francis Crick Institute.

Jonas Sandbrink

Speaker and Judge

Jonas Sandbrink is Entrepreneur-in-Residence at Sentinel Bio, where he works on researcher credentialing for access to bio-capable AI systems. He was previously at the UK AI Security Institute, where he founded the chem/bio evaluations team, the first government program anywhere to assess whether frontier AI models could enable biological or chemical misuse. Before AISI, he advised the UK Cabinet Office and Google DeepMind on biosecurity. He holds a DPhil from Oxford's Nuffield Department of Medicine, where his research examined misuse risks from synthetic virology and AI.

Steph Guerra

Speaker

Dr. Steph Guerra is a molecular biologist and biosecurity expert serving as Senior Biosecurity Research Resident and AIxBio Lead at the RAND Center on AI, Security, and Technology, where she researches the convergence of AI and biotechnology to advance security and the public good. Prior to RAND, she was Head of Strategic Partnerships at the U.S. AI Safety Institute at NIST, where she led efforts to evaluate the national security risks of advanced AI systems, and earlier worked at the White House Office of Science and Technology Policy on biodefense strategy and policy. She holds a PhD in biological and biomedical sciences from Harvard University.

Alex Kleinman

Speaker

Alex Kleinman is Co-founder and Director of Operations at Active Site (formerly Panoplia Labs), a research nonprofit measuring AI's impact on biosecurity risks. He co-led the wet-lab RCT on whether LLMs provide biological uplift to novices, contributed to ABC-Bench (an agentic biosecurity benchmark presented at NeurIPS 2025), and co-authored a study on antibiotic and vaccine countermeasures against mirror bacteria. Before Active Site, he was on the science team at Alvea, working on broad-spectrum vaccines.

Jasper Götting

Judge

Jasper Götting is Head of AI Research at SecureBio, where his work covers understanding, measuring, and mitigating the effects of AI progress on biological risks. Before joining SecureBio, Jasper worked on airborne transmission suppression at Convergent Research and pathogen-agnostic diagnostics for the Ellison Institute of Technology Oxford. He holds a Master's in Biomedical Science and a PhD in Virology from the Hannover Medical School.

Jason Hoelscher-Obermaier

Organizer and Judge

Director of Research at Apart Research. Ph.D. in physics from Vienna. Focuses on AI safety evaluations, interpretability, and alignment.

Grace Braithwaite

Organizer

Dr. Grace Braithwaite is Co-Founder and Director of the Cambridge Biosecurity Hub (cambiohub.org), where she leads the organisation's work building the biosecurity research community in Cambridge and beyond. A medical doctor by training, she completed two years of NHS Foundation training across General Surgery, Cardiology, Endocrinology, A&E and General Practice, alongside a year as a part-time locum. She holds an MBChB and a Master of Public Health (Merit) from the University of Sheffield, with field research on health-system resilience after the 2015 Nepal earthquake and on child nutrition programmes in Bangladesh with World Vision.

Jack Burgess

Organizer and Judge

Dr. Jack Burgess completed his Ph.D. in Neural Computation at Carnegie Mellon University in 2025 before moving to Berlin, Germany. Having worked broadly on scientific applications of AI and machine learning throughout his academic training, as well as having published in the area of computational biology, he is delighted to be leading the Berlin hub's local AIxBio Hackathon. He is currently on the lookout for new opportunities: reach out if your team is growing!

Akshay Iyer

Judge and Co-organizer

CS and Entrepreneurship at Columbia University, IIT Bombay alum. Research experience in neuromorphic engineering and federated learning. Apart Research judge and internal organizer.

Adam Howes

Judge

Adam Howes is a statistician currently working with CDC CFA on epidemic surveillance and Active Site on studies evaluating AI assistance for biological tasks. He holds a PhD in statistics from Imperial College London

Alex Norman

Judge

Alex completed his PhD in Interdisciplinary Bioscience at Oxford and has over 7 years of experience in immunology, bioinformatics and virology. He has worked on broad-spectrum antivirals and vaccines as well as performed epidemiology work during COVID-19. He has worked across biosecurity disciplines on technical projects with Sentinel Bio, SecureBio and the Mirror Biology Dialogues.

Andrew Liu

Judge

Andrew is a Senior Research Scientist at SecureBio, where he has led or contributed to six AI biosecurity benchmarks including those used by OpenAI, Anthropic, and Google DeepMind. Previously, he earned his PhD in computational biology at Harvard Medical School and his BA/MS in math and computer science at Harvard, and has published scientific papers on early pandemic detection, DNA synthesis screening, and genetic engineering attribution.

Andy Morgan

Judge

Andy is a senior policy advisor at the Ministry of Business, Innovation and Employment in New Zealand. His role focuses on the regulation of biotechnology and he has recently represented the New Zealand government internationally on the topic of nucleic acid synthesis screening. Andy was an ERA AIxBio Research Fellow and recently launched the AIxBio Research Hub to help new researchers familiarize themselves with the field.

Benjamin Fefferman

Judge

Benjamin is a technical AI safety researcher, and is compelled to apply his interdisciplinary knowledge of computer science, biology, and chemistry to ensuring that the AI revolution is beneficial for humanity. Since his graduate studies in bioinformatics and genomics at Harvard, and machine learning research at MIT, he has led research pertaining to DNA synthesis screening at the AIxBiosecurity fellowship in Cambridge, UK.

Bryan Tegomoh

Judge

Bryan Tegomoh, MD, MPH, is a physician and epidemiologist with experience in AI, biosecurity, and public health. Author of the Biosecurity Handbook: Biological Security in the AI Era. He led the investigation of the first reported U.S. Omicron variant cluster (early warning system) and has served as a WHO Technical Consultant on Epidemic and Pandemic Preparedness. He works on AI safety in biosecurity and responsible AI in medicine and public health.

Chris Stamper

Judge

Chris is a biosecurity researcher focused on reducing risks from catastrophic biological threats, particularly novel threats emerging from the overlap of AI, biotechnology, and synthetic biology. He brings deep hands-on expertise in immunology, virology, computational biology, and machine learning to evaluating frontier AI capabilities, modeling novel biosecurity threats, and building the field of AIxBio. He has a PhD in immunology from the University of Chicago.

Christine Parthemore

Judge

Christine Parthemore is Director of CSR's Janne E. Nolan Center on Strategic Weapons at the Council on Strategic Risks, where she previously served as CEO for six years and was a founding Board Member. Her current work covers issues in biosecurity and biodefense, countering weapons of mass destruction, innovation and technology trends in national security, and more. She also serves on the Board of SecureBio. Among her prior roles, Christine served as the Senior Advisor to the Assistant Secretary of Defense for Nuclear, Chemical, and Biological Defense Programs in the U.S. Department of Defense.

Elian Belot

Judge

Elian Belot is a founding engineer at Serova Bio, where he builds AI and computational biology systems for personalized cancer vaccines. He previously worked on gene editing and protein design.

Elise Racine

Judge

Elise Racine is a Research Manager at MATS and a PhD candidate and Clarendon Scholar at the University of Oxford, affiliated with the Institute for Ethics in AI and the Ethox Centre. Her work focuses on AI x biosecurity risks, including AI misuse in biological threat development and the governance of dual-use systems. She has contributed to pandemic surveillance and response efforts across multiple capacities and has advised organizations such as the United Nations and U.S. government agencies on issues spanning AI safety, global health security, and policy/governance.

Eva Siegmann

Judge

Eva Siegmann is a Visiting Fellow at the Council on Strategic Risks, where she works on international biodefense initiatives and US biosecurity policy. She previously worked as a Political Affairs Intern at the Biological Weapons Convention Implementation Support Unit in Geneva and at several research institutes, including the Institute for Peace Research and Security Policy at the University of Hamburg, the Fondation pour la Recherche Stratégique and the Nuclear Threat Initiative. She holds an MA in Security Studies from Georgetown University and a Double BA in social and political science from Sciences Po Paris and the Free University of Berlin.

Evan Fields

Judge

Evan Fields is a Research Scientist at SecureBio, where he works on pathogen detection and pandemic early warning systems. He was recently selected for the 2025-26 Fellowship for Ending Bioweapons Programs at the Council on Strategic Risks. Before SecureBio, he was VP of Engineering and Data Science at Zoba, an urban mobility software company, where he had previously led the data science team. He holds a PhD in Operations Research from MIT, with research on estimating latent demand for urban mobility services, and a BA in Mathematics and Computer Science from Pomona College.

Gary Ackerman

Judge

Dr. Gary Ackerman is the CEO and Founder of Nemesys Insights. He has over two decades of expertise in strategic forecasting, risk assessment and human behavior modeling. He concurrently serves as a Professor of Homeland Security at the University at Albany, SUNY. Much of his recent work has applied his red teaming and risk evaluation methodologies to explore risk at the intersection of AI and CBRN, conducting over a dozen risk evaluations and mitigations of frontier AI models in these domains.

Hanna Palya

Judge

Hanna is a PhD student in mathematical epidemiology, working on developing syndromic surveillance into an early warning system for outbreaks with the UK Health Security Agency and the University of Warwick. She is also a research fellow at Fourth Eon Bio, working on function-based sequence screening. In the past three months, she worked on AI-bio threat modelling as a seasonal fellow at GovAI. In the past year, she helped build a free KYC tool for DNA providers and scoped a defense-funded R&D programme for function-based sequence screening at CLTR.

Hayley Severance

Judge

Hayley Anne Severance is the deputy vice president for NTI's Global Biological Policy and Programs team, bringing 15+ years of experience in biosecurity, biological threat reduction, and global health security policy. Prior to NTI, she developed strategic policy for the Department of Defense's Cooperative Biological Engagement Program and advanced the U.S. commitment under the Global Health Security Agenda. She holds an MPH in Infectious Disease Epidemiology from George Washington University and is an alumna of the Emerging Leaders in Biosecurity Initiative Fellowship.

Ian Beatty

Judge

Ian Beatty, Associate Professor of Physics at UNC Greensboro, has a long history of exploring and teaching computational modeling. He recently pivoted into biosecurity R&D and completed a research fellowship in the ERA AIxBio program in Cambridge, England. He is currently working on DNA synthesis screening.

Ihor Kendiukhov

Judge

Ihor is a researcher at the intersection of AI Safety and AI for biology. He is the founder of BiodynAI, a project to apply mechanistic interpretability to biological foundation models. He is also research lead at AI Safety Camp and SPAR.

Jack Douglass

Judge

Jack is a computational biologist and undergraduate at the University of Arizona. Currently, he designs molecular diagnostics at Strive Bioscience, an early-stage startup. He's built bioinformatics software for SecureBio and worked on synthesis screening standards as an ERA Fellow. His research interests include molecular diagnostics, function-based synthesis screening, and transmission suppression technologies.

Joey Bream

Judge

Joey Bream is the Founder and CEO of Bream Design, a software agency that builds backend systems for charities. He also founded the Automation Builders Club, which runs coding events in London. Previously, he spent two years as Operations Assistant at the Krueger AI Safety Lab (KASL) at the University of Cambridge, one of the UK's most influential AI safety research groups. He holds a Master of Engineering in Energy Sustainability and the Environment from Cambridge, where his master's project developed a data model for optimising carbon emissions.

John O'Brien

Judge

John "JT" O'Brien is the Associate Director of Research at the Atlantic Council's Bipartisan Commission on Biodefense and a DPhil candidate in mathematical biology at the University of Oxford. His work focuses on the critical intersection of artificial intelligence, biosecurity, and the life sciences, bridging the gap between scientific innovation and national policy.

John Wang

Judge

Ph.D. from Harvard. Chief Scientific Officer at TwentyTwo. Research focuses on AI-biosecurity, viral evolution, and developing interpretable machine learning tools for biological applications.

Keri Warr

Judge

Keri is a Security Engineering Manager at Anthropic, where he's spent over three years helping build the security organization from the ground up. He also advises external security researchers developing AI tools to secure the open source supply chain and has published research on methods for protecting model weights from sophisticated adversaries.

Kevin Flyangolts

Judge

Kevin Flyangolts is Founder and CEO of Aclid, a biosecurity and biosafety platform. Leading life sciences institutions use Aclid's platform for denied party screening, KYC, trade compliance, and risk intelligence for complex, obfuscated, and AI-generated designs. Building on its commercial screening technology, Aclid also works with the US and UK governments on early-warning, agnostic biothreat intelligence, including handheld and deployed, continuous sequencing.

Laura Bailey

Judge

Laura is completing her PhD in Medical Sciences at the University of Oxford, where her research examines the regulation of innate immunity. During her PhD, she gained policy experience through an internship at the Academy of Medical Sciences. Previously, she completed an integrated Master's in molecular and cellular biochemistry, also at Oxford, researching bacterial genomics and virulence. She is now pivoting into biosecurity policy, focusing on how AI is shaping the life sciences and how progress trades off against risk. Most recently she was a Winter 2026 ERA AIxBio Fellow, researching AI-enabled biosurveillance.

Lee M. Wall

Judge

Alumnus of the ERA AIxBio Fellowship, where he researched tamper-resistant safeguards for open-weight biological foundation models. 3+ years of experience in data science for CBRN risk assessment.

Lennart Justen

Judge

Lennart Justen is a PhD candidate at MIT and the Broad Institute where he is co-advised by Kevin Esvelt and Pardis Sabeti. His work focuses on biosecurity and pandemic preparedness, with a particular focus on pandemic early warning systems and safeguards for dual use AIxBio systems. He has previously worked on biosecurity at SecureBio, the Council on Strategic Risks, and the U.S. State Department.

Leonard Foner

Judge

Leonard Foner is the software architect and security lead for SecureDNA, with a research background in cryptography, distributed systems, and privacy-protecting technologies.

Lucas Boldrini

Judge

Lucas Boldrini is a Technical Consultant at IBBIS, where he supports global biosecurity efforts through DNA synthesis screening and dual-use governance. He has led partnerships and contributed to key components of the Common Mechanism, an open-source tool for identifying potentially hazardous genetic sequences. He is a former Team Experience Manager at the iGEM Foundation, where he managed the world's largest synthetic biology competition. Lucas holds a Master's in Biophysics from the Barcelona Institute of Science and Technology.

Manu Shivakumara

Judge

Manu Shivakumara is a Senior Bioinformatics Engineer at IBBIS, where he supports the development of the Common Mechanism, an open-source platform for DNA synthesis screening. He holds a Ph.D. in Genomics and an M.Sc. in Bioinformatics and Biotechnology, with over eight years of experience analyzing high-dimensional genomic data across diverse taxa. He has authored 20+ peer-reviewed publications in metagenomics, population genomics, and comparative genomics, including in Nature and Science, with over 800 citations.

Max Görlitz

Judge

Max works as a Policy Officer at DG HERA at the European Commission, focusing on R&D funding for innovative countermeasures against pandemics. Previously, he worked on far-UVC research at SecureBio and studied genomics and medicine in Oxford and Munich.

Moritz Hanke

Judge

Dr. Moritz S. Hanke is a Fellow at the Johns Hopkins Center for Health Security, where he focuses on high-consequence biological risks from AI models and nucleic acid synthesis security. Based in Brussels, he engages in European, transatlantic, and international biosecurity policy discussions. He was previously an Ending Bioweapons Fellow at the Council on Strategic Risks and worked on early pathogen detection at SecureBio. He is a licensed medical doctor trained at the Charité Berlin and the University of Tübingen.

Nelly Mak

Judge

Nelly is a Research Scientist on the SecureBio AI team, where she works on AIxBio evals and building safeguards against AI-enabled biorisks. She holds a PhD focused on the natural hosts of zoonotic viruses and brings extensive wet-lab virology expertise to her work.

P. T. Nhean

Judge

P.T. Nhean is the Director of Biosecurity at AI Safety Asia (AISA), where they lead research and policy work at the intersection of artificial intelligence and biological risk across Asia. Based in Singapore, P.T. has contributed to AI-biosecurity governance at national and regional levels, including advising on nucleic acid screening for sequences of concern and leading a study on AI applications in engineering biology across Southeast Asia, South Asia, and the UK. She also worked on epidemiological modeling focusing on influenza with the National University of Singapore, co-drafted the Youth for Health declaration with the WHO European Region, and co-founded Southeast Asia Biosecurity (SEA Bio).

Piers Millett

Judge

Piers D. Millett, Ph.D. is Executive Director of the International Biosecurity and Biosafety Initiative for Science (IBBIS). He was previously Deputy Head of the Implementation Support Unit for the Biological Weapons Convention, where he worked for over a decade, and Vice President for Responsibility at iGEM Foundation. Trained as a microbiologist, he has consulted for the WHO and holds a Ph.D. in International Relations.

Ramanpreet Singh Khinda

Judge

Ramanpreet Singh Khinda is a Staff Software Engineer at LinkedIn and an IEEE Senior Member. He is an international speaker, hackathon judge, and mentor with experience at Google and Snap.

Rassin Lababidi

Judge

Rassin Lababidi is a technical lead at IBBIS working on developing nucleic acid screening standards. He is focused on moderating the Sequence Biosecurity Risk Consortium, evaluating the quality of sequence screening systems, and the responsible access of AI-enabled biological design tools. He has a background in infectious diseases and immunology and has worked in biosecurity and pandemic preparedness for several years.

Sana Zakaria

Judge

Director of Emerging Technologies and Resilience at RAND Europe. Research spans biosecurity policy, pandemic preparedness, and AI-biotech convergence. Works with NATO, CEPI, WHO, and the BWC. PhD in molecular and neurobiology from King's College London.

Stephen Turner

Judge

Stephen D. Turner, Ph.D. is an Associate Professor of Data Science and Assistant Dean for Research at the University of Virginia School of Data Science. Stephen's research spans public health, computational genomics, and AI+biosecurity. Stephen previously held roles at Colossal Biosciences and at Signature Science, where he worked in biosecurity for national security and defense.

Vinoo Selvarajah

Judge

Vinoo Selvarajah is the former VP of Technology at the iGEM Foundation with a long history of working with teams, projects, and education centered on DNA parts. He focuses on synthetic biology technologies and infrastructure that benefit developing bioeconomies, and how to ensure these are distributed and accessible so the global bioeconomy is inclusive and safe.

Zachary Kallenborn

Judge

Zachary Kallenborn is a PhD candidate in Risk Analysis at King's College London researching risk and uncertainty with topical focuses on global catastrophes, drone warfare, critical infrastructure, WMD, and apocalyptic terrorism. He is also affiliated with the University of Oxford, the Center for Strategic and International Studies, George Mason University, and the National Institute for Deterrence Studies.

Zuzanna Matuszewska

Judge

AI biosecurity evaluations researcher at EquiStamp, building capability assessments for biological domains. Holds an MD and is completing a BSc in Mathematics at the University of Warsaw. Active in the biosecurity and effective altruism communities in Poland and Europe.

Ahmed Tijani Akinfalabi

Judge

Ahmed Tijani Akinfalabi is finishing his Master's in Bioinformatics at the University of Potsdam, working at the intersection of machine learning and systems biology. His research covers RNA-Seq analysis, genome annotation, and gene regulatory network inference, and he has built computer vision pipelines with Detectron2 and YOLOv8 for biological imaging on HPC systems.

Miti Saksena